Introduction: The Invisible Engine Behind AI

In today’s rapidly evolving digital landscape, technologies like Artificial Intelligence (AI), Machine Learning (ML), Deep Learning (DL), and Natural Language Processing (NLP) are often celebrated as groundbreaking innovations transforming industries. From personalized recommendations on e-commerce platforms to intelligent virtual assistants and autonomous vehicles, these systems appear almost magical in their ability to mimic human intelligence. However, what often remains unseen is the powerful force working silently behind the scenes: statistics.

Statistics is not merely a supplementary tool in the world of AI; it is the backbone that makes intelligent systems possible. At its core, modern AI is largely about learning from data, and statistics provides the mathematical framework to understand, analyze, and interpret that data effectively. Without statistical principles, machines would lack the ability to recognize patterns, quantify uncertainty, or make informed decisions based on incomplete or noisy information.

Every stage of an AI system, from data collection and preprocessing to model building, evaluation, and optimization, relies heavily on statistical concepts. Techniques such as probability distributions, hypothesis testing, regression analysis, and Bayesian inference enable machines to draw meaningful insights from vast datasets. These methods help models not only learn from historical data but also generalize their knowledge to new, unseen scenarios.

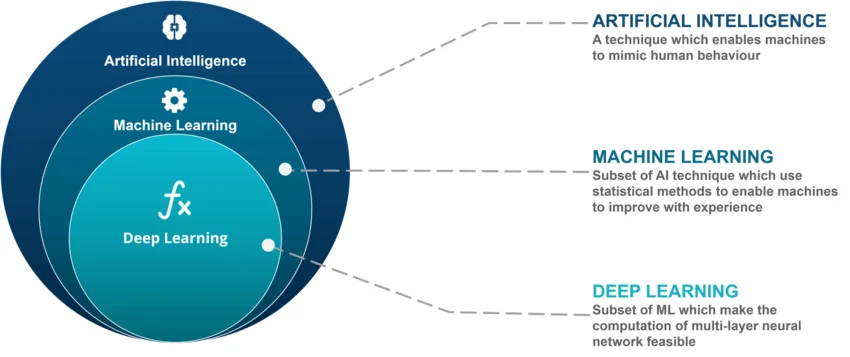

In Machine Learning, for instance, algorithms are designed to identify patterns within data and make predictions. This process is fundamentally statistical in nature. Deep Learning, a subset of ML, uses neural networks that are trained through optimization techniques grounded in statistical theory. Similarly, Natural Language Processing leverages probabilistic models to understand and generate human language with increasing accuracy.

Moreover, statistics plays a crucial role in handling uncertainty and variability, two inherent characteristics of real-world data. Whether it’s predicting customer behavior, detecting fraudulent transactions, or enabling self-driving cars to make split-second decisions, statistical models help these systems operate reliably even in unpredictable environments.

In essence, statistics acts as the invisible engine that powers AI technologies. It transforms raw data into actionable intelligence, enabling machines to learn, adapt, and improve over time. As AI continues to advance and integrate deeper into our daily lives, the importance of statistics will only grow stronger, quietly but fundamentally driving the intelligence behind every smart system we interact with.

Understanding the Role of Statistics in AI and Machine Learning

Machine Learning is fundamentally about learning from data, and this is precisely where statistics plays a central role. It provides the mathematical and conceptual framework needed to interpret data, extract meaningful insights, and build models that can make informed decisions. Without statistics, data would remain just raw numbers, lacking context or direction.

Key statistical concepts such as probability distributions, hypothesis testing, regression analysis, and Bayesian inference form the foundation of modern AI systems. These concepts enable machines to not only understand patterns within data but also to quantify uncertainty, validate assumptions, and continuously refine their predictions.
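To make "continuously refine their predictions" concrete, here is a minimal Python sketch of Bayesian inference with a Beta-Binomial model. It assumes a hypothetical scenario, estimating an unknown success rate such as the probability that a user clicks a recommendation; the `BetaBelief` class and the counts are illustrative and not taken from any library.

```python
from dataclasses import dataclass


@dataclass
class BetaBelief:
    """Beta(alpha, beta) belief about an unknown success probability (hypothetical helper)."""
    alpha: float = 1.0  # prior pseudo-count of successes; Beta(1, 1) is a uniform prior
    beta: float = 1.0   # prior pseudo-count of failures

    def update(self, successes: int, failures: int) -> "BetaBelief":
        # Conjugate update: Beta prior + binomial data -> Beta posterior
        return BetaBelief(self.alpha + successes, self.beta + failures)

    def mean(self) -> float:
        # Posterior mean estimate of the success probability
        return self.alpha / (self.alpha + self.beta)

    def variance(self) -> float:
        # Posterior variance: how uncertain the estimate still is
        a, b = self.alpha, self.beta
        return a * b / ((a + b) ** 2 * (a + b + 1))


# Hypothetical data: clicks (successes) vs. ignored recommendations (failures)
prior = BetaBelief()
after_first_batch = prior.update(successes=7, failures=3)
after_second_batch = after_first_batch.update(successes=60, failures=30)
```

With these hypothetical counts the posterior mean stays at 2/3 after both batches, while the posterior variance falls from about 0.017 to about 0.002: the estimate does not just change, its uncertainty is quantified and shrinks as evidence accumulates.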

Statistics empowers AI and Machine Learning in several critical ways:

● Identifying patterns and relationships in data:

Statistical techniques help uncover hidden structures and correlations within large datasets, allowing models to detect trends that may not be immediately visible.

● Making predictions based on historical information:

By analyzing past data, statistical models can forecast future outcomes, which is essential for applications like demand forecasting, recommendation systems, and risk assessment.

● Measuring uncertainty and model performance:

Statistics provides tools to evaluate how confident a model’s predictions are and how well it performs. Metrics such as accuracy, precision, and recall summarize performance, while confidence intervals indicate how much those estimates could vary, helping ensure reliability.

● Avoiding overfitting and improving generalization:

One of the biggest challenges in Machine Learning is ensuring that models perform well not just on training data but also on unseen data. Statistical methods like cross-validation and regularization help strike this balance.
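The performance measures in this list can be computed directly from a confusion matrix. The following Python sketch uses hypothetical binary labels; the helper names are illustrative, and the confidence interval uses the simple normal (Wald) approximation, which is only rough for small samples.

```python
import math


def confusion_counts(y_true, y_pred, positive=1):
    """Count true/false positives and negatives for a binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    return tp, fp, fn, tn


def evaluate(y_true, y_pred, z=1.96):
    """Accuracy, precision, recall, and an approximate 95% interval for accuracy."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    n = tp + fp + fn + tn
    accuracy = (tp + tn) / n
    precision = tp / (tp + fp) if tp + fp else 0.0  # of predicted positives, how many were right
    recall = tp / (tp + fn) if tp + fn else 0.0     # of actual positives, how many were found
    # Normal-approximation (Wald) interval; unreliable for small n or accuracy near 0 or 1
    half_width = z * math.sqrt(accuracy * (1 - accuracy) / n)
    interval = (max(0.0, accuracy - half_width), min(1.0, accuracy + half_width))
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "accuracy_ci": interval}


# Hypothetical labels and predictions for ten examples
y_true = [1, 0, 1, 1, 0, 0, 1, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 1, 1, 0]
report = evaluate(y_true, y_pred)
```

For these ten toy predictions, accuracy is 0.7, precision is 4/6, and recall is 4/5, and the 95% interval around accuracy spans roughly 0.42 to 0.98, a reminder that ten examples say little about true performance.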

In essence, statistics acts as the guiding force that helps AI models be not only intelligent but also accurate and reliable. Without statistical reasoning, these systems would struggle to make sense of data, leading to poor performance and limited real-world applicability.

Deep Learning and Statistical Foundations

Deep Learning, a powerful subset of Machine Learning, is often associated with complex neural networks and advanced computational capabilities. However, beneath this complexity lies a strong statistical foundation that drives how these models learn and improve. At its core, Deep Learning is not just about layers and neurons; it is about optimizing decisions based on data, guided by statistical principles.

Neural networks learn by repeatedly adjusting their internal parameters, known as weights, to minimize errors in their predictions. This learning process pairs a loss function, which measures how far the model’s predictions are from the actual outcomes, with an optimization method such as gradient descent, which determines how to adjust the weights to reduce that loss.
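Here is a minimal Python sketch of that loop: mean squared error as the loss and plain full-batch gradient descent as the optimizer. To keep it short it fits a one-variable linear model rather than a neural network; the toy data, learning rate, and step count are illustrative assumptions.

```python
def mse_loss(w, b, xs, ys):
    """Mean squared error of the line y = w*x + b on the data."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def gradient_descent(xs, ys, lr=0.05, steps=2000):
    """Fit w and b by repeatedly stepping against the gradient of the MSE loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Residuals: how far each prediction is from the actual outcome
        residuals = [w * x + b - y for x, y in zip(xs, ys)]
        # Partial derivatives of the mean squared error with respect to w and b
        grad_w = 2.0 / n * sum(r * x for r, x in zip(residuals, xs))
        grad_b = 2.0 / n * sum(residuals)
        # Move the parameters a small step in the direction that reduces the loss
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Hypothetical toy data generated by y = 2x + 1
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = gradient_descent(xs, ys)
```

Neural networks follow the same pattern; the gradients are simply computed through many layers by backpropagation instead of by hand.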

Every key component of a neural network can be understood through a statistical lens:

● Loss functions measure error, often in probabilistic terms:

Loss functions quantify the difference between predicted and actual values. Many of these functions, such as cross-entropy loss, are rooted in probability theory and help the model assess how well it is performing.

● Activation functions add non-linearity, and some have probabilistic interpretations:

Activation functions introduce non-linearity into the model, enabling it to learn complex patterns. Some, such as sigmoid and softmax (commonly used in the output layer), map raw scores to values between 0 and 1 that can be read as probabilities.

● Optimization algorithms use statistical estimates to find good model parameters:

Algorithms like gradient descent and its variants iteratively adjust weights to minimize loss. Stochastic gradient descent, for example, estimates the gradient from small random samples (mini-batches) of the training data, which makes it practical to navigate very large parameter spaces and converge toward good, though not necessarily globally optimal, solutions.

● Regularization techniques control model complexity:

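Methods such as dropout (randomly disabling units during training) and L2 regularization (penalizing large weights) are grounded in statistical reasoning; L2 regularization, for instance, corresponds to placing a Gaussian prior on the weights. Both help prevent overfitting by encouraging the model to generalize well to new data.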
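The following Python sketch shows the probabilistic pieces from the list above: sigmoid and softmax turning raw scores into probabilities, cross-entropy as the negative log-likelihood of the correct class, and an L2 penalty added to the loss. The logits, weights, and regularization strength `lam` are hypothetical values, and dropout is not shown.

```python
import math


def sigmoid(z):
    """Map a real-valued score to (0, 1); often read as P(class = 1)."""
    # Two branches avoid overflow in math.exp for large |z|
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def softmax(logits):
    """Turn a list of scores into a probability distribution that sums to 1."""
    m = max(logits)  # subtracting the max keeps math.exp from overflowing
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(probs, target_index, eps=1e-12):
    """Negative log-likelihood of the true class under the predicted distribution."""
    return -math.log(max(probs[target_index], eps))


def l2_penalty(weights, lam):
    """L2 regularization term: grows with the squared size of the weights."""
    return lam * sum(w * w for w in weights)


# Hypothetical raw scores for three classes, with the true class at index 0
logits = [2.0, 1.0, 0.1]
probs = softmax(logits)
weights = [0.5, -1.2, 0.3]  # hypothetical model weights
loss = cross_entropy(probs, target_index=0) + l2_penalty(weights, lam=0.01)
```

The total loss rewards assigning high probability to the correct class while the penalty term pulls weights toward zero, which is the trade-off regularization is meant to strike.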

Despite its reputation for complexity, the learning mechanism of Deep Learning is deeply rooted in statistics. It is this statistical backbone that allows neural networks to process vast amounts of data, recognize intricate patterns, and deliver highly accurate predictions. In essence, statistics provides the logic and discipline that give Deep Learning a structured and reliable foundation, even when individual models are hard to interpret.

Natural Language Processing and Statistical Evolution

Natural Language Processing (NLP) has undergone a remarkable transformation over the years, evolving from simple rule-based systems to highly sophisticated, AI-driven models capable of understanding and generating human-like language. Despite these advancements, one element has remained constant throughout this journey: the foundational role of statistics.

As NLP moved beyond hand-written rules, it came to rely heavily on traditional statistical models. Techniques such as n-grams and Hidden Markov Models (HMMs) were widely used to analyze language patterns based purely on probabilities. These models focused on predicting the likelihood of word sequences, enabling basic tasks like speech recognition, part-of-speech tagging, and simple text generation. While limited in their capabilities, they laid the groundwork for modern NLP by demonstrating how language could be modeled statistically.
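A bigram model, the simplest n-gram model, can be sketched in a few lines of Python. It estimates the probability of each next word by maximum likelihood, that is, by counting how often it follows the previous word in a tiny, hypothetical corpus. The `<s>` and `</s>` sentence-boundary tokens are a common convention, and the function names are illustrative.

```python
from collections import Counter, defaultdict


def train_bigram_model(sentences):
    """Estimate P(next_word | word) by maximum likelihood from bigram counts."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    model = {}
    for prev, next_counts in counts.items():
        total = sum(next_counts.values())
        model[prev] = {word: c / total for word, c in next_counts.items()}
    return model


def sentence_probability(model, sentence):
    """Multiply the conditional probabilities of each word given the previous one."""
    tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
    prob = 1.0
    for prev, nxt in zip(tokens, tokens[1:]):
        # Unseen bigrams get probability 0 here; practical models apply smoothing
        prob *= model.get(prev, {}).get(nxt, 0.0)
    return prob


# Hypothetical three-sentence training corpus
corpus = ["the model learns patterns", "the model predicts words", "the data has patterns"]
model = train_bigram_model(corpus)
```

In this toy corpus "the" is followed by "model" in two of three sentences, so P(model | the) = 2/3, and "the model learns patterns" receives probability 1/3. Any word pair never seen in training gets probability 0, which is why practical n-gram models add smoothing.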

As NLP evolved, it incorporated Machine Learning and, eventually, Deep Learning approaches. Today’s advanced systems, including transformer-based architectures, may appear vastly different from earlier models, but they are still deeply rooted in statistical principles. The shift has been from simpler probability models to more complex, data-driven representations, but the core idea of learning from patterns in data remains unchanged.

Modern NLP systems depend on statistics in several key areas:

● Language modeling and probability prediction:

At the heart of NLP is the ability to predict the likelihood of word sequences. Statistical methods help models determine which words are most likely to appear next in a sentence, forming the basis of text generation and auto-completion.

● Text classification and sentiment analysis:

Statistical techniques enable models to categorize text (such as spam detection or topic classification) and analyze sentiment by identifying patterns in word usage and context.

● Named Entity Recognition (NER) and machine translation:

Identifying names, locations, and organizations within text, as well as translating between languages, relies on probabilistic models that capture linguistic structures and relationships.

● Contextual understanding in advanced models:

Even in transformer-based systems, attention mechanisms and embeddings are trained using statistical optimization, allowing models to capture context and meaning more effectively.
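Text classification is a good example of a classic statistical technique in NLP. The Python sketch below is a multinomial Naive Bayes sentiment classifier with add-one (Laplace) smoothing, trained on four hypothetical reviews; it is meant to show the probabilistic reasoning, not to serve as a production classifier.

```python
import math
from collections import Counter


def train_naive_bayes(docs, labels, alpha=1.0):
    """Multinomial Naive Bayes with add-alpha smoothing (illustrative helper)."""
    vocab = {w for d in docs for w in d.lower().split()}
    log_priors, log_likelihoods = {}, {}
    for c in set(labels):
        class_docs = [d for d, label in zip(docs, labels) if label == c]
        # Prior P(class): share of training documents with this label
        log_priors[c] = math.log(len(class_docs) / len(docs))
        counts = Counter(w for d in class_docs for w in d.lower().split())
        total = sum(counts.values())
        # Smoothed P(word | class) so unseen words never give probability 0
        log_likelihoods[c] = {
            w: math.log((counts[w] + alpha) / (total + alpha * len(vocab)))
            for w in vocab
        }
    return log_priors, log_likelihoods


def predict(log_priors, log_likelihoods, doc):
    """Return the class with the highest posterior score for the document."""
    scores = {}
    for c in log_priors:
        # Sum log-probabilities (avoids underflow); out-of-vocabulary words are ignored
        scores[c] = log_priors[c] + sum(
            log_likelihoods[c][w] for w in doc.lower().split() if w in log_likelihoods[c]
        )
    return max(scores, key=scores.get)


# Hypothetical labelled reviews
docs = [
    "great product loved it",
    "excellent quality great value",
    "terrible product waste of money",
    "awful quality very disappointed",
]
labels = ["pos", "pos", "neg", "neg"]
priors, likelihoods = train_naive_bayes(docs, labels)
```

Working in log space turns a product of many small probabilities into a sum, which keeps the scores numerically stable even for long documents.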

Importantly, even the most advanced language models operate by leveraging probability distributions. They predict the next word (or token) in a sequence based on learned patterns from massive datasets. This probabilistic approach is what enables them to generate coherent, context-aware, and human-like responses.
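The sketch below illustrates that final prediction step under simple assumptions: a model has already produced a score (logit) for each candidate token, softmax turns the scores into a probability distribution, and the next token is chosen either greedily or by sampling. The candidate tokens, scores, and temperature values are hypothetical; real models score tens of thousands of tokens at once.

```python
import math
import random


def next_token_distribution(logits, temperature=1.0):
    """Softmax over candidate-token scores; temperature < 1 sharpens, > 1 flattens."""
    scaled = {tok: score / temperature for tok, score in logits.items()}
    m = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(v - m) for tok, v in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def greedy_pick(dist):
    """Choose the single most probable token."""
    return max(dist, key=dist.get)


def sample_pick(dist, rng):
    """Draw a token at random in proportion to its probability."""
    tokens = list(dist)
    return rng.choices(tokens, weights=[dist[t] for t in tokens], k=1)[0]


# Hypothetical scores a model might assign after the prefix "statistics is the"
logits = {"backbone": 3.2, "foundation": 2.9, "banana": -1.0}
dist = next_token_distribution(logits, temperature=0.7)
rng = random.Random(0)  # seeded so the sample is reproducible
```

Greedy decoding always returns "backbone" here, while sampling occasionally picks "foundation"; lowering the temperature makes the most likely token even more dominant.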

In essence, while NLP has evolved technologically, its core remains statistical. From early probabilistic models to modern deep learning architectures, statistics continues to serve as the driving force behind language understanding, ensuring that machines can interpret, process, and generate human language with increasing accuracy and sophistication.

Technology Integration: From Data to Intelligent Systems

The true power of Artificial Intelligence emerges when statistical methods are seamlessly integrated with modern AI technologies to transform raw data into intelligent, decision-making systems. This integration forms a complete pipeline, from data collection and preprocessing to model development and deployment, in which statistics plays a critical role at every stage.

Before any Machine Learning model can function effectively, data must first be gathered, cleaned, and structured. Statistical techniques are essential in this phase, helping to handle missing values, detect anomalies, and ensure data quality. Once prepared, this data becomes the foundation upon which intelligent models are built, enabling systems to learn patterns, make predictions, and adapt over time.
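Two of the cleaning steps mentioned above, handling missing values and detecting anomalies, can be sketched with Python's standard `statistics` module (`statistics.quantiles` needs Python 3.8 or later). This example assumes hypothetical sensor readings, fills gaps with the median because it is robust to extreme values, and flags points outside Tukey's 1.5 × IQR fences; both are common choices, not the only ones.

```python
import statistics


def impute_missing_with_median(values):
    """Replace None with the median of the observed values."""
    observed = [v for v in values if v is not None]
    fill = statistics.median(observed)
    return [fill if v is None else v for v in values], fill


def iqr_outliers(values, k=1.5):
    """Return values outside Tukey's fences [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)  # the three quartile cut points
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high], (low, high)


raw = [10, 12, None, 11, 13, 12, 11, 95]  # hypothetical sensor readings
cleaned, fill_value = impute_missing_with_median(raw)
outliers, bounds = iqr_outliers(cleaned)
```

The missing reading is filled with 12, and 95 is flagged as an anomaly; whether to drop, cap, or investigate such a value is a domain decision rather than a purely statistical one.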

This powerful combination of statistics and AI is clearly visible across a wide range of real-world technologies:

● Predictive analytics in healthcare and finance:

Statistical models analyze historical and real-time data to forecast outcomes such as disease risks, patient recovery rates, market trends, and financial risks, supporting better decision-making and planning.

● Recommendation engines in e-commerce platforms:

By analyzing user behavior, preferences, and past interactions, statistical algorithms help generate personalized product or content recommendations, enhancing user experience and engagement.

● Computer vision in security and automation:

From facial recognition to object detection, statistical methods enable machines to interpret visual data accurately, making applications like surveillance systems and automated manufacturing more reliable.

● Conversational AI in customer support:

Chatbots and virtual assistants use statistical language models to understand user queries, predict responses, and deliver human-like interactions, improving efficiency and customer satisfaction.
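Recommendation engines are a convenient place to see the statistics at work. The following Python sketch is a minimal user-based collaborative filter: it measures how similar users' rating vectors are with cosine similarity and scores each item the target user has not rated by the similarity-weighted ratings of other users. The users, items, and ratings are hypothetical, and 0 is assumed to mean "not rated".

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two rating vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def recommend(ratings, user, top_n=2):
    """Rank items the user hasn't rated by similarity-weighted ratings of other users."""
    target = ratings[user]
    scores = {}
    for other, their_ratings in ratings.items():
        if other == user:
            continue
        sim = cosine_similarity(target, their_ratings)
        for item, rating in enumerate(their_ratings):
            # 0 is assumed to mean "not rated"
            if target[item] == 0 and rating > 0:
                scores[item] = scores.get(item, 0.0) + sim * rating
    return sorted(scores, key=scores.get, reverse=True)[:top_n]


# Hypothetical ratings for items 0-4
ratings = {
    "alice": [5, 4, 0, 0, 1],
    "bob": [5, 5, 4, 0, 1],
    "carol": [1, 0, 1, 5, 5],
}
```

Here Alice's ratings closely resemble Bob's (cosine similarity of about 0.87), so item 2, which Bob rated 4, ranks above item 3, which only the less similar Carol rated highly.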

What makes this integration truly impactful is the role of statistics in ensuring accuracy, reliability, and efficiency. It allows systems to measure performance, manage uncertainty, and continuously improve through feedback and new data.

In essence, statistics acts as the bridge that connects raw data to intelligent action. Without it, AI technologies would lack the precision and adaptability required for real-world applications. With it, they become powerful tools capable of transforming industries and enhancing everyday experiences.

Several key market trends highlight how widely these statistics-driven AI technologies are being adopted:

● Increased adoption of AI in small and medium enterprises (SMEs):

Once limited to large corporations, AI technologies are now becoming more accessible to SMEs thanks to cloud computing and cost-effective tools. This democratization is enabling smaller businesses to leverage data for growth and innovation.

● Growth of AI-powered analytics tools:

Advanced analytics platforms powered by AI are helping organizations move beyond traditional reporting to predictive and prescriptive insights, allowing for smarter and faster decision-making.

● Rising demand for data-driven decision-making:

Companies are increasingly relying on data rather than intuition. Statistical models play a crucial role in analyzing trends, forecasting outcomes, and minimizing risks in business operations.

● Expansion of NLP-based applications like chatbots and virtual assistants:

Businesses are adopting conversational AI to enhance customer experience, automate support services, and streamline communication, leading to improved engagement and reduced operational costs.

What binds all these trends together is the foundational role of statistics. It provides the analytical backbone that ensures AI systems are not only functional but also reliable, scalable, and accurate. From validating models to measuring performance and handling uncertainty, statistical techniques make it possible for AI to deliver real-world value.

In conclusion, as the AI market continues to expand, the importance of statistics becomes even more pronounced. It is this invisible yet essential component that enables industries to harness the full potential of AI, driving innovation and shaping the future of intelligent technologies.

Future Growth and Opportunities

The future of Artificial Intelligence (AI), Machine Learning (ML), and Natural Language Processing (NLP) is deeply intertwined with the continued evolution of statistical methods. As the volume, variety, and velocity of data increase, traditional approaches alone will no longer be sufficient. The next wave of innovation will depend on more advanced, scalable, and interpretable statistical techniques that can handle complex, real-world data environments.

As AI systems become more embedded in critical sectors such as healthcare, finance, governance, and education, the demand for accuracy, transparency, and accountability will rise significantly. This is where statistics will play an even more crucial role, ensuring that models are not only powerful but also trustworthy and explainable.

Several key opportunities are shaping the future of this domain:

● Development of more explainable AI systems using statistical models:

One of the biggest challenges in modern AI is the “black box” nature of complex models. Statistical techniques are being used to make AI decisions more interpretable, helping stakeholders understand how and why a model arrives at a particular outcome.

● Integration of AI with real-time data analytics:

The future lies in systems that can process and analyze data in real time. Statistical methods will be essential in handling streaming data, detecting patterns instantly, and enabling faster, data-driven decisions.

● Growth in personalized AI applications across industries:

From personalized healthcare treatments to customized shopping experiences, AI systems will increasingly rely on statistical models to understand individual behavior and preferences, delivering highly tailored solutions.

● Increased focus on ethical AI and bias reduction using statistical analysis:

As AI adoption grows, concerns around fairness, bias, and ethics are becoming more prominent. Statistical tools are critical in identifying biases in data and models, ensuring that AI systems operate in a fair and responsible manner.
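Handling streaming data, as in the real-time analytics item above, usually means updating statistics one observation at a time instead of recomputing them over the full history. The Python sketch below uses Welford's online algorithm for the running mean and variance and flags values more than three standard deviations from the mean of everything seen before them. The readings, threshold, and warm-up length are hypothetical choices for illustration.

```python
import math


class RunningStats:
    """Welford's online algorithm: mean and variance updated one value at a time."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the current mean

    def update(self, x):
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    @property
    def variance(self):
        # Sample variance; reported as 0.0 until there are at least two values
        return self._m2 / (self.n - 1) if self.n > 1 else 0.0

    def z_score(self, x):
        sd = math.sqrt(self.variance)
        return (x - self.mean) / sd if sd > 0 else 0.0


def monitor(stream, threshold=3.0, warmup=5):
    """Flag values far from the running mean of everything seen before them."""
    stats = RunningStats()
    flagged = []
    for i, x in enumerate(stream):
        # Score the new value before folding it into the running statistics
        if stats.n >= warmup and abs(stats.z_score(x)) > threshold:
            flagged.append((i, x))
        stats.update(x)
    return flagged, stats


# Hypothetical stream of readings with one spike at index 8
readings = [100, 102, 98, 101, 99, 100, 103, 97, 250, 101]
flagged, stats = monitor(readings)
```

In this sketch the spike is still folded into the running statistics after being flagged, which inflates the variance; production monitors often exclude flagged points or use windowed or exponentially weighted statistics instead.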

Looking ahead, the synergy between AI technologies and statistics will open up vast opportunities for innovation and career growth. Organizations are actively seeking professionals who not only understand AI algorithms but also possess strong statistical expertise to build systems that are reliable, scalable, and transparent.

In essence, statistics will continue to be the guiding force that shapes the future of intelligent systems, enabling AI to evolve from powerful tools into responsible, human-centric technologies that can drive meaningful impact across industries.

Challenges and Limitations

While statistics forms the backbone of AI and Machine Learning, its application is not without challenges. The effectiveness of any AI system heavily depends on the quality of data and the correctness of the statistical methods applied. If these foundations are weak, even the most advanced models can produce misleading or unreliable results.

One of the primary concerns is that real-world data is often messy, incomplete, or biased. Statistical models rely on assumptions about data distribution and structure, and when these assumptions are violated, the outcomes can be significantly affected. As AI systems are increasingly used in high-stakes environments, these limitations become even more critical.

Some of the most common challenges include:

● Data imbalance and sampling bias:

When certain classes or categories are underrepresented in a dataset, models may become biased toward the majority class. This can lead to unfair or inaccurate predictions, especially in applications like fraud detection or medical diagnosis.

● Overfitting due to improper model evaluation:

Overfitting occurs when a model performs well on training data but fails to generalize to new, unseen data. This often results from inadequate validation techniques or excessive model complexity, highlighting the need for robust statistical evaluation methods.

● Lack of interpretability in complex models:

Advanced models, particularly in Deep Learning, can act as “black boxes,” making it difficult to understand how decisions are made. This lack of transparency poses challenges in trust, accountability, and regulatory compliance.

● Difficulty in handling unstructured data:

Data such as text, images, and audio does not follow traditional structured formats, making statistical analysis more complex. Specialized techniques are required to extract meaningful patterns from such data.

● Incorrect statistical assumptions:

Applying models with incorrect assumptions about data distribution or relationships can lead to flawed conclusions and poor decision-making.
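The first two challenges in this list can be illustrated with a short Python sketch. With hypothetical fraud-style data (95 legitimate cases, 5 fraudulent), a "model" that always predicts the majority class scores 95% accuracy yet catches none of the fraud; a stratified k-fold split, in turn, keeps the rare class represented in every evaluation fold. The helper names are illustrative, and real implementations usually shuffle indices within each class before assigning folds.

```python
from collections import defaultdict


def accuracy_and_recall(y_true, y_pred, positive=1):
    """Accuracy over all examples and recall on the positive (rare) class."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    actual_pos = sum(t == positive for t in y_true)
    return correct / len(y_true), (tp / actual_pos if actual_pos else 0.0)


def stratified_k_fold(labels, k):
    """Assign indices to k folds so each fold keeps roughly the overall class ratio."""
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        # Deal each class's indices round-robin across the folds
        for j, idx in enumerate(indices):
            folds[j % k].append(idx)
    return folds


# Hypothetical labels: 95 legitimate (0) and 5 fraudulent (1) transactions
y_true = [0] * 95 + [1] * 5
always_legit = [0] * 100  # a "model" that never predicts fraud
acc, rec = accuracy_and_recall(y_true, always_legit)
folds = stratified_k_fold(y_true, k=5)
```

Each of the five folds here contains 19 legitimate cases and exactly one fraudulent case, so every validation round can measure recall on the minority class instead of silently rewarding the majority-class guess.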

Addressing these challenges requires more than just technical implementation; it demands a deep understanding of statistical principles combined with advanced AI techniques. By improving data quality, applying appropriate models, and ensuring rigorous evaluation, organizations can build AI systems that are not only powerful but also reliable, fair, and trustworthy.

Conclusion: Statistics as the Foundation of Intelligent Innovation

Artificial Intelligence and its related technologies are undeniably reshaping the way businesses operate and societies function. From automation and predictive analytics to intelligent decision-making systems, AI has become a driving force of modern innovation. However, behind this technological revolution lies a critical enabler: statistics.

Statistics provides the essential mathematical and analytical framework that allows machines to learn from data, recognize patterns, and make informed decisions. It transforms raw information into actionable insights, ensuring that AI systems are not just powerful, but also meaningful and reliable in real-world applications.

The success of AI does not depend solely on advanced algorithms or computational power; it relies heavily on how effectively data is analyzed and interpreted. Statistical methods ensure that models are accurate, results are validated, and uncertainties are properly managed. This foundation is what enables AI systems to adapt, improve, and deliver consistent performance across diverse scenarios.

As technology continues to evolve and data becomes increasingly complex, the importance of statistics will only grow stronger. It will play a vital role in addressing challenges such as model transparency, ethical concerns, and scalability, ensuring that AI systems remain efficient, fair, and trustworthy.

For individuals and organizations aiming to build a future in AI and data science, understanding the connection between statistics and intelligent systems is not optional; it is essential. Those who can bridge the gap between statistical thinking and technological implementation will be best positioned to drive innovation and create impactful, data-driven solutions in the years to come.

How Pinaki IT Hub Can Help You Build a Future in AI, Machine Learning, and Data Science

As the demand for Artificial Intelligence, Machine Learning, Deep Learning, and NLP continues to grow, the need for practical knowledge and industry-relevant skills has become more important than ever. Learning these technologies is not just about understanding theory; it requires hands-on experience, real-world exposure, and continuous guidance to stay aligned with industry expectations.

This is where Pinaki IT Hub Blogs and its ecosystem play a significant role. Pinaki IT Hub is focused on bridging the gap between academic learning and real industry requirements by offering a combination of technical training, mentorship, and practical project-based learning.

One of the key advantages of learning through Pinaki is its focus on real-world application. Instead of limiting learners to theoretical concepts, the platform emphasizes practical exposure through live projects, helping individuals understand how technologies like AI, Machine Learning, and Data Analytics are actually used in industries. This approach ensures that learners are not only knowledgeable but also job-ready.

Additionally, Pinaki IT Hub provides structured learning with mentorship from experienced professionals. This guidance helps learners navigate complex topics such as statistical modeling, data analysis, and algorithm development with clarity and confidence. Regular mentorship and support systems ensure continuous improvement and career direction.

Another important aspect is career support and upskilling. In a rapidly evolving tech landscape, continuous learning is essential to remain relevant. Pinaki focuses on industry-oriented training programs in areas like Artificial Intelligence, Machine Learning, Data Science, and emerging technologies, enabling learners to stay ahead in the competitive job market.

For businesses, Pinaki IT Hub offers technology-driven solutions that combine software, analytics, and strategic implementation to improve efficiency and decision-making. By integrating statistical approaches with modern AI technologies, organizations can build smarter systems and achieve sustainable growth.

In conclusion, whether you are a student, a working professional, or a business owner, building expertise in AI and statistics requires the right guidance, tools, and practical exposure. Platforms like Pinaki IT Hub provide a structured pathway to not only learn these technologies but also apply them effectively in real-world scenarios, making you future-ready in the digital economy.